Post by: Anis Al-Rashid

Digital networks provide connection and information, but they also create new avenues for harm. In recent years, AI-generated "deepfakes"—synthetic images, audio and video that can appear authentic—have introduced fresh risks for online abuse.

When used maliciously, deepfake technology becomes a tool for targeted harassment. These falsified files can be highly convincing and are often deployed to intimidate, defame or humiliate individuals. While the technology has legitimate uses in media and education, its misuse raises urgent concerns for mental health professionals, regulators and social media operators.

Deepfakes are media items produced by machine learning systems that alter or fabricate images, voice recordings or video. Examples include swapping someone’s face onto another person’s body, creating audio that mimics a person’s speech, or manufacturing material that appears to be private.

Harassment using deepfakes is often personal and invasive. Common forms include:

Sexually explicit material created without consent.

Impersonation clips used to spread false claims or smear reputations.

Fabricated appearances or statements affecting professional or social standing.

The realistic nature of such content amplifies emotional harm and can leave victims feeling exposed and powerless.

People targeted by deepfakes commonly report anxiety, depressive symptoms and post-traumatic stress. The loss of control over one’s image or voice can produce ongoing stress that interferes with sleep, work and relationships.

Repeated or high-profile deepfake attacks can weaken trust in both personal connections and professional networks. Targets may withdraw from online life or avoid interactions for fear of further reputational damage.

When a person’s likeness or voice is distorted, it can undermine their sense of identity. This effect is especially harmful for adolescents and young adults who are still shaping their online and offline identities.

Standard responses to online abuse—such as reporting content or blocking users—often fall short with deepfakes because:

Manipulated material can spread quickly across multiple services.

Detecting sophisticated fakes requires specialized tools.

Shame or embarrassment may keep victims from seeking help promptly.

Platforms have adopted rules and automated systems to identify and remove harmful deepfakes. User reporting features exist, and machine-learning models flag manipulation indicators, but attackers continually refine their methods.

Companies are investing in prevention through AI detection and user education. Typical steps include:

Restricting uploads flagged as manipulated and harmful.

Offering guidance to help users spot synthetic media.

Working with researchers and authorities to strengthen reporting systems.

Even with improvements, platforms must balance free expression with safety, scale protections for billions of accounts, and tackle the cross-platform circulation of harmful material.

Several jurisdictions have begun criminalising or regulating aspects of deepfake misuse, often under existing laws on non-consensual explicit content, defamation and cyberbullying. Enforcement remains difficult because content can be shared anonymously and across borders.

Regulatory frameworks must reflect the specific threats of deepfakes, including:

The high fidelity that makes misidentification likely.

Rapid replication and wide online distribution.

Psychological and reputational harms that persist beyond initial exposure.

Policymakers, tech firms and mental health organisations should coordinate to:

Speed up takedowns and streamline reporting procedures.

Provide victims with legal help and mental health support.

Promote responsible AI development and safer platform design.

Mental health clinicians are increasingly screening for distress tied to digital harassment. Early recognition of trauma related to manipulated media can reduce long-term consequences.

Cognitive Behavioral Therapy (CBT): Supports victims in processing stress and restoring self-image.

Trauma-Informed Care: Emphasises safety, trust-building and empowerment for those affected.

Digital Literacy Education: Teaching people to recognise manipulated media can reduce feelings of helplessness.

Community groups, online forums and public information campaigns can help victims connect, access resources and lower stigma. Mental health providers can partner with tech firms to share coping tools and preventative advice.

Developers of AI media tools have a duty to foresee misuse and adopt safeguards such as watermarking, detection features and clearer user warnings.

In communities where reputation is central to social and economic life, deepfake attacks can have a disproportionate impact. Women, public figures and marginalised people are targeted more frequently than others.

Improving public understanding of synthetic media helps prevent victim-blaming and strengthens societal resilience against manipulated content.

Researchers are refining AI tools that spot deepfakes by identifying inconsistencies in lighting, facial motion or audio cues. Progress continues even as attackers evolve their techniques.

Some services are piloting proactive alerts, verification marks and digital labelling to help users distinguish authentic content from manipulated media.

Cooperation among tech companies, governments, academia and NGOs is essential. Shared databases, faster reporting channels and joint awareness campaigns can slow the spread and reduce the harm of deepfake harassment.

Deepfake misuse is likely to rise as AI capabilities advance. Effective mitigation will depend on:

Education and Awareness: Teaching at-risk groups how to spot and report fakes.

Legal and Regulatory Evolution: Updating laws to address unique AI-related harms.

Mental Health Support: Broader access to counselling, trauma-informed care and digital literacy programs.

Technological Safeguards: Better detection, prevention and governance tools on platforms.

As digital life deepens, policymakers, platforms and health services must balance innovation with protections for personal safety, ethics and psychological wellbeing.

This piece is intended for information and education only. It does not replace legal, medical or professional advice. Individuals affected by harassment should consult qualified mental health providers or legal authorities.

7 Everyday Practices for Natural Belly Fat Loss

Explore 7 everyday habits that help in burning belly fat naturally without drastic dieting. Simple s

The Compounding Effect: Transforming $5,000 into $120,000 Over Time

Learn how compounding can evolve a $5,000 investment into $120,000 through time and the right strate

Blood Sugar Testing: Morning vs After Breakfast – What You Need to Know

Explore when to check your blood sugar: fasting or post-breakfast for better health insights.

WhatsApp Experiencing Issues Today? Global Users Report Delays

WhatsApp users around the globe are facing message delays and issues. Discover the reason behind tod

Is Your Android Monitoring You? Disable These 6 Settings Immediately

Concerned about your Android's monitoring? Discover 6 essential settings to change now for better pr

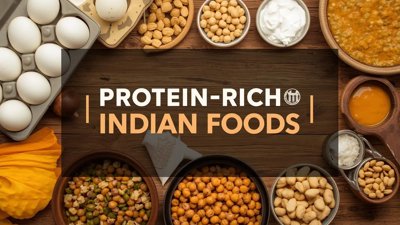

Boost Your Health with These 7 Protein-Packed Indian Foods

Explore 7 protein-rich Indian foods that can enhance your daily nutrition naturally and affordably.