Post by: Samir Qureshi

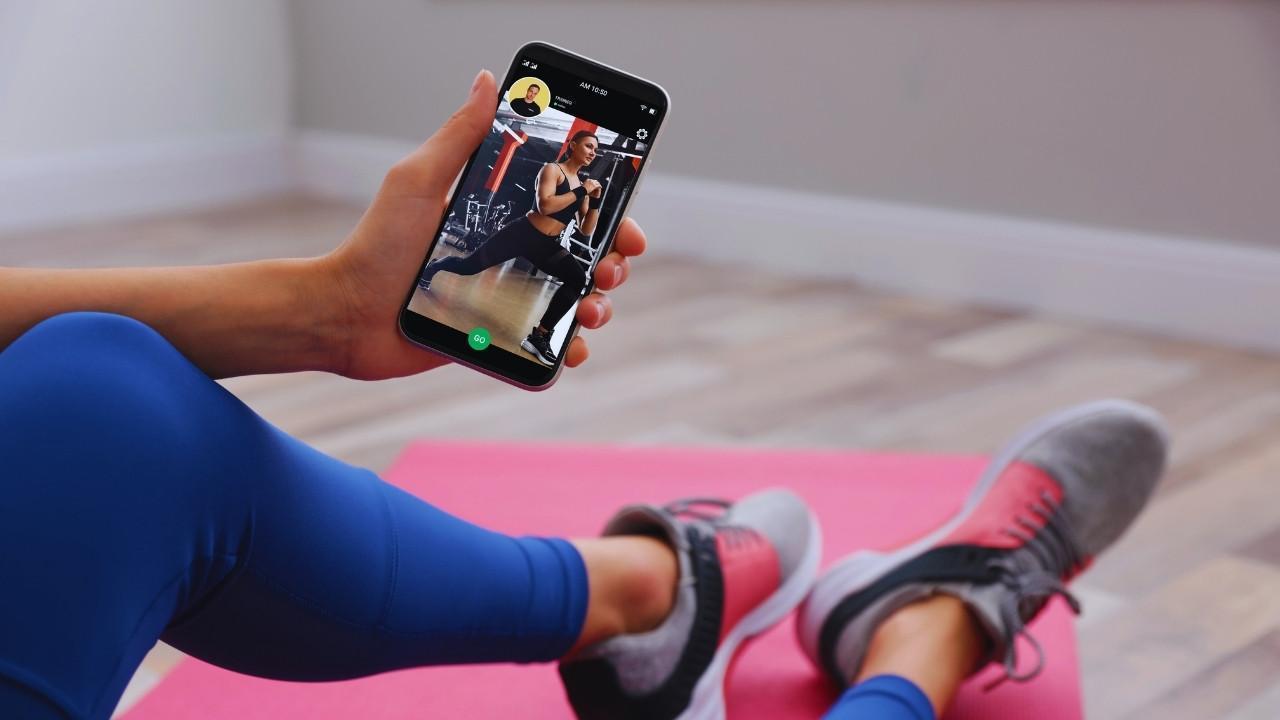

Health technology has moved well past simple step counters and diet trackers. Modern platforms combine continuous biometrics, telemedicine services and AI analytics to deliver tailored health guidance — a transition that accelerated after the pandemic as remote monitoring became mainstream.

Today’s wellness apps gather detailed physiological signals such as heart-rate variability, sleep stages, blood-oxygen levels and stress indicators. When linked to digital identities, these data form individual health records used to forecast risks, refine exercise plans and support chronic disease management.

Yet alongside these advances comes a pressing question: how much personal privacy do users surrender for convenience and insight?

Digital identity now underpins next-generation health services rather than merely serving as official ID. Governments and private providers are developing interoperable, secure digital ID systems to simplify access to medical records, prescriptions and insurance functions.

In countries that deploy national health IDs, people can authenticate via biometrics or secure tokens to retrieve medical histories and speed up hospital processes. The benefits are clear: faster admissions, immediate record access and better AI-driven care planning. But this connectivity also increases exposure.

A breached digital ID can reveal far more than login credentials; it can expose full medical histories, genetic details and sensitive psychiatric records. Because data breaches in healthcare are among the costliest of any sector, protecting digital identities is increasingly seen as a matter of public health.

Wellness platforms depend on large volumes of user information to provide personalization. Many users, however, do not fully grasp what they share or how that information is monetized. Third-party brokers and advertisers may repurpose de-identified health signals for targeted marketing, creating ethical concerns.

This creates a privacy paradox: consumers value personalization but are often reluctant to share the sensitive data it depends on. Some apps take advantage of this tension by embedding data-sharing clauses deep within long terms-of-service agreements.

Regulatory movements in the EU and North America are pushing for "privacy by design" in digital health, demanding greater transparency and user control over data storage, usage and sharing — steps intended to restore individual agency.

AI drives many advanced features in wellness apps, from fertility forecasts to sleep disorder screening. These systems learn from user inputs and biological markers to generate personalized recommendations.

However, continuous monitoring raises ethical flags: the boundary between beneficial health insights and pervasive surveillance can be thin. Models trained on skewed datasets may misread signals for certain ethnicities or genders, amplifying bias.

To counter these risks, regulators and researchers press for transparency, explainability and human oversight, including independent audits of algorithms to ensure fairness and reliability.

The global wellness sector — estimated at over $5 trillion — rests on consumer trust. Whether offering mindfulness services, personalized nutrition or AI coaching, vendors rely on the integrity of user data.

Some companies now emphasize transparent data controls, stronger encryption and anonymization as market differentiators. Apps that clearly explain their data practices are positioning themselves as reliable lifestyle partners rather than mere tools.

This trend marks a shift: successful health-tech firms will need to combine convenience with demonstrable ethical practices and robust user protections.

Policymakers worldwide are updating rules to address the digital health surge. In the EU, the AI Act and the Digital Services Act, alongside the GDPR's protections for health data, set influential standards for AI use and data governance in wellness platforms.

In the United States, HIPAA continues to adapt to challenges from cloud-based systems, while countries in Asia and the Middle East are crafting regional approaches to data ownership and security in health tech.

These regulatory efforts reflect a consensus: innovation must be paired with governance to build a trustworthy ecosystem where users can adopt technologies without fear.

App-based therapy and mindfulness tools have improved access to mental health support, but they also raise confidentiality concerns. Some platforms retain chat logs or mood-tracking data that, if exposed, could harm users.

Experts recommend applying data-minimization: collect only essential information and give users clear control over data deletion or export. Transparency and informed consent are essential to preserve trust in digital mental-health services.
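
As a rough illustration of what data minimization with user-controlled export and deletion might look like in code, here is a minimal sketch. Everything in it is an assumption made for the example: the field names, the `ESSENTIAL_FIELDS` allow-list and the in-memory `MoodStore` class are hypothetical and do not describe any particular app.

```python
# Hypothetical allow-list: the only fields a mood-tracking feature is assumed to need.
ESSENTIAL_FIELDS = {"user_id", "mood_score", "recorded_on"}


def minimize(record: dict) -> dict:
    """Return a copy of the record containing only allow-listed fields."""
    return {key: value for key, value in record.items() if key in ESSENTIAL_FIELDS}


class MoodStore:
    """Toy in-memory store that minimizes on write and supports export/delete."""

    def __init__(self) -> None:
        self._rows: list[dict] = []

    def save(self, record: dict) -> None:
        # Non-essential fields (location, free-text chat, etc.) are dropped
        # before anything is stored.
        self._rows.append(minimize(record))

    def export(self, user_id: str) -> list[dict]:
        # Give the user a copy of everything held about them.
        return [dict(row) for row in self._rows if row.get("user_id") == user_id]

    def delete(self, user_id: str) -> int:
        # Remove all of the user's rows and report how many were deleted.
        before = len(self._rows)
        self._rows = [row for row in self._rows if row.get("user_id") != user_id]
        return before - len(self._rows)
```

The design choice worth noting is that filtering happens at write time, so data the service never stores cannot later be leaked, sold or subpoenaed.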

Interoperability — seamless data exchange among devices, apps and medical records — promises a fuller picture of patient health. Integrated inputs from wearables and records could help flag early signs of conditions such as hypertension or sleep disorders.

But more connected systems mean more potential attack vectors. Building a secure, patient-controlled architecture, with clear consent mechanisms and security-first design, will be critical as the sector evolves.

Blockchain offers a model for decentralizing health data and granting users encrypted control. Smart-contract verification for access requests can reduce unauthorized changes and improve auditability.

Estonia's use of blockchain technology in its e-health system is often cited as an example of how such tools can increase transparency. While not a cure-all, decentralized frameworks could form a resilient layer for protecting digital health identities.
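
The auditability idea can be shown with a much simpler structure than a full blockchain. The sketch below is a hash-chained access log, in which each entry's hash covers the previous one, so a later edit to any entry is detectable. It is an illustration under stated assumptions, not a description of Estonia's system or of any smart-contract platform; the `AccessLog` class and its field names are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash used before the first entry


def _digest(prev_hash: str, entry: dict) -> str:
    """Hash an entry together with the hash of the entry before it."""
    payload = json.dumps({"prev": prev_hash, "entry": entry}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class AccessLog:
    """Append-only log of record-access requests, chained by SHA-256 hashes."""

    def __init__(self) -> None:
        self.entries: list[tuple[dict, str]] = []  # (entry, hash) pairs

    def append(self, requester: str, record_id: str, granted: bool) -> None:
        entry = {"requester": requester, "record_id": record_id, "granted": granted}
        prev_hash = self.entries[-1][1] if self.entries else GENESIS
        self.entries.append((entry, _digest(prev_hash, entry)))

    def verify(self) -> bool:
        """Recompute the chain; any altered entry breaks every later hash."""
        prev_hash = GENESIS
        for entry, stored_hash in self.entries:
            if _digest(prev_hash, entry) != stored_hash:
                return False
            prev_hash = stored_hash
        return True
```

A single chained log like this only makes tampering evident; it does not prevent whoever holds the log from rewriting it and recomputing every hash. Distributing or publicly anchoring the hashes is what decentralized designs add on top.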

Despite technological and regulatory progress, user knowledge remains central to data safety. Many people underestimate the sensitivity of seemingly innocuous data such as step counts, sleep hours or stress markers.

Public education, ethical branding and awareness campaigns are needed to cultivate informed users. As demand for accountability grows, companies will need to move beyond minimal compliance to proactive ethical standards.

The future of health technology hinges on balancing innovation with privacy protections. As digital IDs become gateways to care, developers, regulators and users share responsibility for safeguarding personal information.

AI and big data can transform early detection and everyday wellbeing, but progress must be shaped by human-centered design that values autonomy, security and fairness.

When digital health advances responsibly, it can change not only how bodies are monitored but how wellbeing is understood — as a combination of individual control, safe systems and collective benefit.

This article is for informational purposes only. It does not constitute medical, legal, or financial advice. Readers should consult qualified professionals before making decisions related to healthcare, technology use, or data protection.
